Originally published at: https://bitmovin.com/142nd-mpeg-meeting-takeaways/

Preface

Bitmovin is a proud member and contributor to several organizations working to shape the future of video, none for longer than the Moving Picture Experts Group (MPEG), where I, along with a few senior developers at Bitmovin, am an active member. Personally, I have been a member and attendee of MPEG for 20+ years and have been documenting the progress since early 2010. Today, we’re working hard to further improve the capabilities and efficiency of the industry’s newest standards, while exploring the potential applications of machine learning and neural networks.

The 142nd MPEG Meeting – MPEG issues Call for Proposals for Feature Coding for Machines

The official press release of the 142nd MPEG meeting can be found here and comprises the following items:

- MPEG issues Call for Proposals for Feature Coding for Machines

- MPEG finalizes the 9th Edition of MPEG-2 Systems

- MPEG reaches the First Milestone for Storage and Delivery of Haptics Data

- MPEG completes 2nd Edition of Neural Network Coding (NNC)

- MPEG completes Verification Test Report and Conformance and Reference Software for MPEG Immersive Video

- MPEG finalizes work on metadata-based MPEG-D DRC Loudness Leveling

In this report, I’d like to focus on Feature Coding for Machines, MPEG-2 Systems, Haptics, Neural Network Coding (NNC), MPEG Immersive Video, and a brief update about DASH (as usual).

Feature Coding for Machines

At the 142nd MPEG meeting, MPEG Technical Requirements (WG 2) issued a Call for Proposals (CfP) for technologies and solutions enabling efficient feature compression for video coding for machine vision tasks. This work on “Feature Coding for Video Coding for Machines (FCVCM)” aims at compressing intermediate features within neural networks for machine tasks. As applications for neural networks become more prevalent and the neural networks increase in complexity, use cases such as computational offload become more relevant to facilitate widespread deployment of applications utilizing such networks. Initially as part of the “Video Coding for Machines” activity, over the last four years, MPEG has investigated potential technologies for efficient compression of feature data encountered within neural networks. This activity has resulted in establishing a set of ‘feature anchors’ that demonstrate the achievable performance for compressing feature data using state-of-the-art standardized technology. These feature anchors include tasks performed on four datasets.

9th Edition of MPEG-2 Systems

MPEG-2 Systems was first standardized in 1994, defining two container formats: program stream (e.g., used for DVDs) and transport stream. The latter, also known as MPEG-2 Transport Stream (M2TS), is used for broadcast and internet TV applications and services. MPEG-2 Systems was awarded a Technology and Engineering Emmy® in 2013. At the 142nd MPEG meeting, MPEG Systems (WG 3) ratified the 9th edition of ISO/IEC 13818-1 MPEG-2 Systems. The new edition adds support for Low Complexity Enhancement Video Coding (LCEVC), the youngest in the MPEG family of video coding standards, on top of more than 50 media stream types, including, but not limited to, 3D Audio and Versatile Video Coding (VVC). The new edition also supports new options for signaling different kinds of media, which can aid the selection of the best audio or other media tracks for specific purposes or user preferences. As an example, it can indicate that a media track provides information about a current emergency.
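For readers less familiar with the transport stream container, here is a minimal Python sketch of how the fixed 4-byte header of a 188-byte transport stream packet can be read. It only covers the long-standing packet header layout (sync byte, PID, continuity counter) and does not touch the stream type signaling added in the 9th edition. The `TsHeader` class and `parse_ts_header` function are hypothetical names chosen for this illustration.

```python
from dataclasses import dataclass

TS_PACKET_SIZE = 188  # every transport stream packet is 188 bytes long
SYNC_BYTE = 0x47      # first byte of every transport stream packet


@dataclass
class TsHeader:
    """Hypothetical container for a few fields of the 4-byte TS header."""
    payload_unit_start: bool
    pid: int
    adaptation_field_control: int
    continuity_counter: int


def parse_ts_header(packet: bytes) -> TsHeader:
    """Parse the fixed header of a single transport stream packet."""
    if len(packet) != TS_PACKET_SIZE:
        raise ValueError(f"expected {TS_PACKET_SIZE} bytes, got {len(packet)}")
    if packet[0] != SYNC_BYTE:
        raise ValueError("missing sync byte 0x47")
    # The 13-bit PID spans the low 5 bits of byte 1 and all of byte 2.
    pid = ((packet[1] & 0x1F) << 8) | packet[2]
    return TsHeader(
        payload_unit_start=bool(packet[1] & 0x40),
        pid=pid,
        adaptation_field_control=(packet[3] >> 4) & 0x03,
        continuity_counter=packet[3] & 0x0F,
    )


# A null packet (PID 0x1FFF) built by hand, used here only as sample input.
null_packet = bytes([0x47, 0x1F, 0xFF, 0x10]) + bytes([0xFF]) * 184
header = parse_ts_header(null_packet)
print(hex(header.pid), header.continuity_counter)
```

A real demultiplexer would go on to read the program association and program map tables to learn which stream type each PID carries; that part is left out of this sketch.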

Storage and Delivery of Haptics Data

At the 142nd MPEG meeting, MPEG Systems (WG 3) reached the first milestone for ISO/IEC 23090-32 entitled “Carriage of haptics data” by promoting the text to Committee Draft (CD) status. This specification enables the storage and delivery of haptics data (defined by ISO/IEC 23090-31) in the ISO Base Media File Format (ISOBMFF; ISO/IEC 14496-12). Because haptics data has both spatial and temporal components, a data unit containing various spatial or temporal data packets serves as the basic entity, analogous to an access unit of audio-visual media. Additionally, this draft introduces an explicit indication of silent periods, reflecting the sparse nature of haptics data. The standard is planned to be completed, i.e., to reach the status of Final Draft International Standard (FDIS), by the end of 2024.

Neural Network Coding (NNC)

Many applications of artificial neural networks for multimedia analysis and processing (e.g., visual and acoustic classification, extraction of multimedia descriptors, or image and video coding) utilize edge-based content processing or federated training. The trained neural networks for these applications contain many parameters (weights), resulting in a considerable size. Therefore, the MPEG standard for the compressed representation of neural networks for multimedia content description and analysis (NNC, ISO/IEC 15938-17, published in 2022) was developed, which provides a broad set of technologies for parameter reduction and quantization to compress entire neural networks efficiently.

Recently, an increasing number of artificial intelligence applications, such as edge-based content processing, content-adaptive video post-processing filters, or federated training, need to exchange updates of neural networks (e.g., after training on additional data or fine-tuning to specific content). Such updates include changes of the neural network parameters but may also involve structural changes in the neural network (e.g., when extending a classification method with a new class). In scenarios like federated training, these updates must be exchanged frequently, such that much more bandwidth over time is required, e.g., in contrast to the initial deployment of trained neural networks.

The second edition of NNC addresses these applications through efficient representation and coding of incremental updates and by extending the set of compression tools that can be applied to both entire neural networks and updates. Trained models can be compressed to 10-20% of their original size or smaller and, for several architectures, even to below 3%, without performance loss. Higher compression rates are possible at the cost of moderate performance degradation. In a distributed training scenario, a model update after a training iteration can be represented at 1% or less of the base model size on average without sacrificing the classification performance of the neural network. NNC also provides synchronization mechanisms, particularly for distributed artificial intelligence scenarios, e.g., if clients in a federated learning environment drop out and later rejoin.
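To put these percentages into perspective, the following back-of-the-envelope Python sketch applies them to a made-up example. The 100 MB base model and the 50 training rounds are assumptions picked purely for illustration, and the helper `size_at_fraction` is hypothetical; only the percentages come from the figures quoted above.

```python
def size_at_fraction(original_mb: float, fraction: float) -> float:
    """Size in MB when data is represented at `fraction` of its original size."""
    return original_mb * fraction


BASE_MODEL_MB = 100.0   # assumed size of the uncompressed base model
TRAINING_ROUNDS = 50    # assumed number of federated training rounds

# Initial deployment: 10-20% of the original size, below 3% for some architectures.
deploy_upper_mb = size_at_fraction(BASE_MODEL_MB, 0.20)
deploy_lower_mb = size_at_fraction(BASE_MODEL_MB, 0.10)
deploy_best_mb = size_at_fraction(BASE_MODEL_MB, 0.03)

# Incremental update: about 1% of the base model size per training iteration.
update_mb = size_at_fraction(BASE_MODEL_MB, 0.01)
total_updates_mb = update_mb * TRAINING_ROUNDS

# For comparison: resending the full uncompressed model in every round.
total_uncompressed_mb = BASE_MODEL_MB * TRAINING_ROUNDS

print(f"deployment: {deploy_lower_mb:.0f}-{deploy_upper_mb:.0f} MB "
      f"(under {deploy_best_mb:.0f} MB for some architectures)")
print(f"updates over {TRAINING_ROUNDS} rounds: {total_updates_mb:.0f} MB "
      f"instead of {total_uncompressed_mb:.0f} MB")
```

Under these assumptions, the updates exchanged over all rounds add up to roughly half the size of a single uncompressed model, which illustrates why update coding matters once models are exchanged frequently.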

Verification Test Report and Conformance and Reference Software for MPEG Immersive Video

At the 142nd MPEG meeting, MPEG Video Coding (WG 4) issued the verification test report of ISO/IEC 23090-12 MPEG immersive video (MIV) and completed the development of the conformance and reference software for MIV (ISO/IEC 23090-23), promoting it to the Final Draft International Standard (FDIS) stage.

MIV was developed to support the compression of immersive video content, in which multiple real or virtual cameras capture a real or virtual 3D scene. The standard enables the storage and distribution of immersive video content over existing and future networks for playback with 6 degrees of freedom (6DoF) of view position and orientation. MIV is a flexible standard for multi-view video plus depth (MVD) and multi-planar video (MPI) that leverages strong hardware support for commonly used video formats to compress volumetric video.

ISO/IEC 23090-23 specifies how to conduct conformance tests and provides reference encoder and decoder software for MIV. This draft includes 23 verified and validated conformance bitstreams spanning all profiles and encoding and decoding reference software based on version 15.1.1 of the test model for MPEG immersive video (TMIV). The test model, objective metrics, and other tools are publicly available at https://gitlab.com/mpeg-i-visual.

The latest MPEG-DASH Update

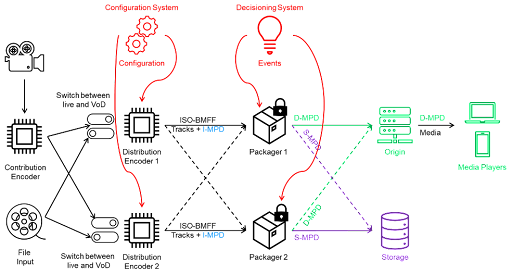

Finally, I’d like to provide a quick update regarding MPEG-DASH, which has a new part, namely redundant encoding and packaging for segmented live media (REAP; ISO/IEC 23009-9). The following figure provides the reference workflow for redundant encoding and packaging of live segmented media.

The reference workflow comprises (i) Ingest Media Presentation Description (I-MPD), (ii) Distribution Media Presentation Description (D-MPD), and (iii) Storage Media Presentation Description (S-MPD), among others; each defining constraints on the MPD and tracks of ISO base media file format (ISOBMFF).

Additionally, the MPEG-DASH Breakout Group discussed various technologies under consideration, such as (a) combining HTTP GET requests, (b) signaling common media client data (CMCD) and common media server data (CMSD) in an MPEG-DASH MPD, (c) image and video overlays in DASH, and (d) updates on lower latency.

An updated overview of DASH standards/features can be found in the Figure below.

The next meeting will be held in Geneva, Switzerland, from July 17 to 21, 2023. Further details can be found here.

Have any thoughts or questions about neural networks or the other updates described above? Check out Bitmovin’s Video Developer Community and join the conversation!